Spend enough time in the halls of “higher learning,” and you’ll witness a succession of fads, trends, and endless jargon. They typically have a shelf life of about five years before they are replaced by new ones. It’s always interesting to me to identify where they come from and why they’ve achieved such currency.

Nonprofit leaders and opinion-makers like to take over the ideas and jargon of their for-profit cousins, often assuming – erroneously – a seamless transition. In the world of higher education, the takeover of the corporate mindset has been like a cancer, leading in no small part to the explosion of administrative staff, as lamented by John Seery in a brilliant article he recently published.

But it’s not even primarily in the proliferation of programs and staff, most of which colleges can ill afford, that corporatization has made itself most perniciously felt. It has affected the premise of the whole enterprise itself, with schools nattering endlessly about “leadership” and the “development of skills and knowledge” that will enable students to become… well, effective consumers and producers.

The dirty not-so-secret of higher education is that most students attend not to get educated but to get credentialed, such credentialing being the sine qua non for admission into the upper class. Schools that fancy themselves as steeped in the liberal arts are uncertain how to square the demands of “learning for the sake of learning” with those of career preparation, and so they fail at them both, demeaning both the liberal and the servile arts in the process.

The corruption of the purpose of higher education can be most clearly discerned in its uncritical adoption of corporate jargon.

I use the word “uncritical” advisedly, because for all their yapping about “critical thinking” most academic leaders have scant clue how to exercise it. “Robust” has become a current term of art, as has “inclusive,” but in this article, I want to discuss the ubiquitous “best practices.”

As with most jargon, the appeal of the term derives from its self-evident rightness. Who, after all, could oppose “best practices”? It has a positively Aristotelian ring to it. It satisfies the requirement that good thinking and sound judgment be comparative. It allows for experimentation and for the best ideas and practices to surface. What could possibly be wrong?

If all that was meant by “best practices” was that human beings have a tendency to observe what other people do, particularly those people who do something well, and then imitate those actions as nearly as they can, I’d have no problem with the term.

But in the academy, “best practices” is all too often not an expression of judgment and thinking, but a substitute for it.

It typically serves one of two purposes. The first is cover: when faced with challenges such as declining enrollments or sporadic fundraising, otherwise witless bureaucrats can resort to “best practices” to make it look as if they are at least doing something, without having to think about what they’re doing.

More likely, “best practices” serves as a mask to disguise an ideological objective. For example, when applied to faculty recruitment or a variety of “student development” issues, “best practices” will aim at predetermined outcomes while taking conversation about those outcomes off the table. Let’s say you want to establish that the summum bonum of academic life is increasing the representation of certain racial groups on campus, and this to the detriment, if not the exclusion, of all other considerations. All you have to do is say that a certain set of hiring guidelines is “best practices” and that you have “studies” backing up your position, and you’ve effectively limited discussion on the topic.

The main objection I have, however, is that the appeal to “best practices” is simply deaf to the principle of variety and the integrity of different places. It should be obvious that what works well in one place might not work as well in another. What works at a large secular state university in the bluest of blue places – like Cal Berkeley, for example – might not work at a smaller Christian liberal arts college in a red place such as Western Michigan.

But that won’t stop the apparatchiks at that small Christian college from imitating their “superiors,” both because it is ideologically advantageous to do so and because it saves them the trouble of having to think for themselves about what might work best in this particular place. Furthermore, since many academic leaders are professional carpetbaggers, it saves them the trouble of having to engage in patient learning about the culture of a place.

It may be that in theory “best practices” are to be prudently adapted, but in practice it seldom works that way. If only there were best practices for best practices.

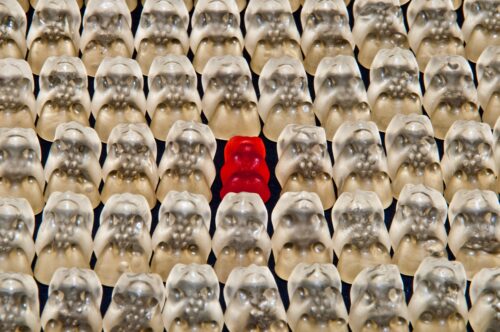

All human action aims at an end. Not all practices can be adjusted to all ends, and focusing on practices to the exclusion of ends distorts human action. “Best practices,” it turns out, are typically nothing more than tools of homogenization: they make the landscape a more barren and less interesting place, and they make nonprofits lose sight of their distinctive missions.

Photo credit: Truthout.org via Visual hunt / CC BY-NC-SA (modifications were made and mater

I suppose I am one of those mindless administrators at a public university. I do fancy myself an academic inasmuch as I constantly read and I do teach a lot in addition to my administrative duties. This article strikes me as a hit-and-run piece; we can all agree that administrators are too powerful, and we bitch about the jargon. I have a boss who loves jargon and charts. The article says very little, really; I have heard these complaints from professors going back to the 1980s. To quote a Randy Newman song, “they go in dumb and come out dumb too.” This has always been the case; it is always shocking how few college graduates are actually “educated.” Plato wrote something about this.

I don’t disagree, but there’s only so much you can do in a 600-word post. I’ve written about this more thoroughly in other venues, addressing some of the issues you raise.

Agreed, but this article and the discussion it addresses could well use greater depth. The corporatization of higher ed began with Andrew Carnegie over a century ago, whose Endowment for Higher Ed advocated bringing businessmen onto Boards, to introduce greater practical rigor. They introduced such ideas as the “credit hour” so they could measure, by quantifying, student accomplishment and progress toward degrees. That began the slippery slope. Moreover, liberal ed classically conceived was not learning for the sake of itself, but for students’ self-development—strengthening and refining what it is to be human—and it was practical—studying rhetoric to become a persuasive speaker and writer, etc. Higher ed today is skating over the surface of its recent degraded forms, and discussions of it need to delve much deeper, historically and philosophically.